First Impressions and Onboarding

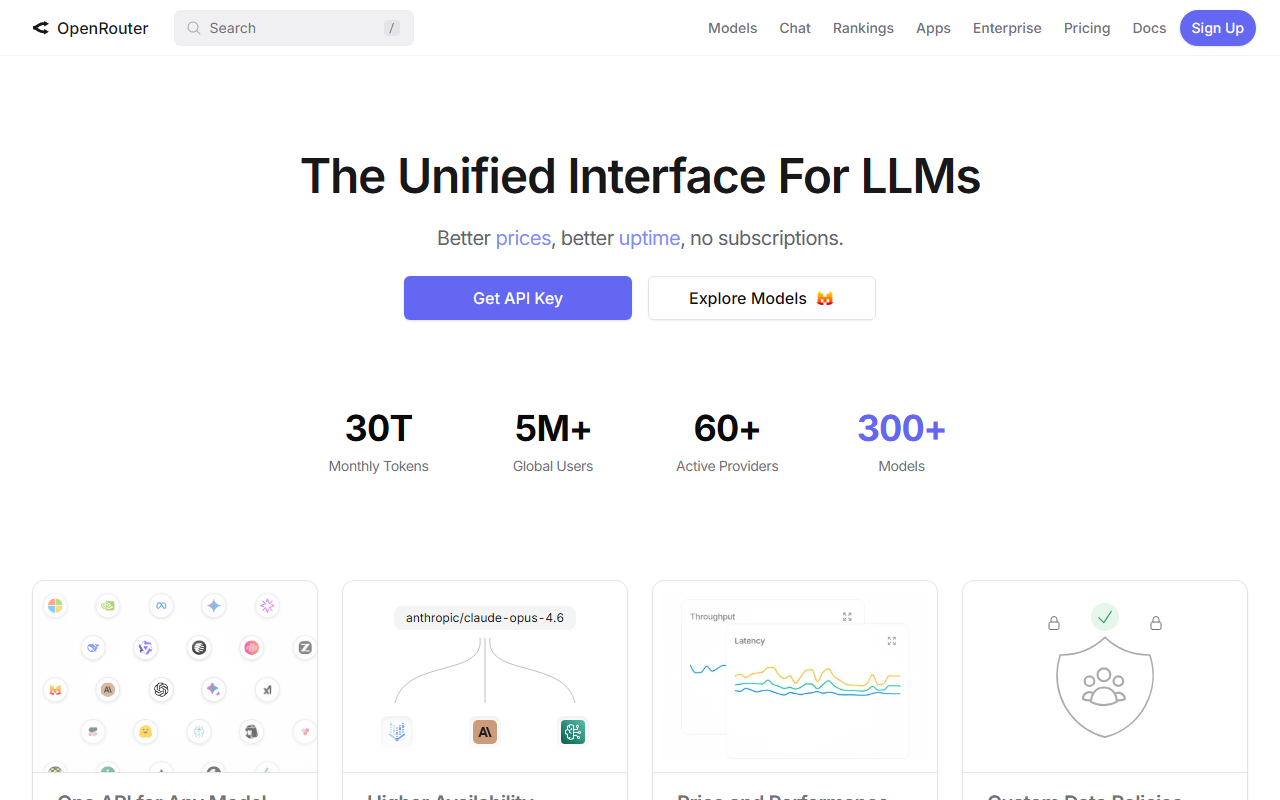

Upon visiting OpenRouter at openrouter.ai, the landing page immediately communicates its core value proposition: a single API to access hundreds of language models. The hero section displays headline metrics: 70 trillion monthly tokens, over 5 million global users, and 300+ models across 60+ providers. The signup flow is refreshingly straightforward. I clicked through the three-step process: create an account (using Google, GitHub, or even MetaMask), buy credits (I tested the smallest option, $10), and generate an API key. The dashboard then presents a clean interface for model exploration, usage analytics, and API key management. What stood out was the simplicity: the API is fully OpenAI-compatible, so I could take an existing OpenAI SDK integration and change only the base URL (https://openrouter.ai/api/v1) and the API key. Onboarding took under five minutes, and within the same session I was making requests to models from Anthropic, Google, Meta, and others.
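Because the API is OpenAI-compatible, a chat request is an ordinary HTTP POST to the chat completions path under OpenRouter’s base URL. The minimal sketch below uses only the Python standard library to build (but not send) such a request. The model ID `anthropic/claude-3.5-sonnet` and the `OPENROUTER_API_KEY` environment variable name are illustrative assumptions; check the model explorer for current IDs.

```python
import json
import os
import urllib.request

# OpenRouter's OpenAI-compatible base URL.
BASE_URL = "https://openrouter.ai/api/v1"


def build_chat_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    payload = {
        "model": model,  # OpenRouter model IDs are namespaced as "provider/model"
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Hypothetical env var name; a placeholder keeps the sketch runnable offline.
    key = os.environ.get("OPENROUTER_API_KEY", "sk-or-placeholder")
    req = build_chat_request("anthropic/claude-3.5-sonnet", "Hello!", key)
    print(req.full_url)
    # Sending it would be: json.load(urllib.request.urlopen(req))
```

With the official OpenAI Python SDK, the equivalent switch is passing `base_url="https://openrouter.ai/api/v1"` and your OpenRouter key to the `OpenAI(...)` client constructor; the rest of an existing integration stays the same.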

Core Features and Technical Performance

OpenRouter’s main strength is unified access. Instead of managing separate API keys, billing, and rate limits for each provider, developers get one endpoint. The platform also routes requests intelligently: if a model’s primary provider is down, the request automatically falls back to another provider hosting the same model. During my testing, I deliberately targeted a model whose providers occasionally saw outages; the fallback happened seamlessly, with no noticeable increase in latency. OpenRouter says its edge-based infrastructure adds minimal latency, and in my tests from a US-based connection, response times were competitive with those of direct provider APIs. Another feature that impressed me was the granular data policy controls: under the data policy tab, I could restrict which providers handle prompts containing sensitive information, which is useful for enterprise compliance. OpenRouter also provides a real-time visualization of model routing that shows how traffic is distributed across providers. Finally, the platform powers agents such as Replit’s AI and Hermes Agent, which suggests it holds up in production environments.
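The fallback and data-policy behavior described above can be modeled as two steps: filter the providers hosting a model by the user’s policy, then try the survivors in priority order until one succeeds. This is a conceptual sketch with made-up provider names and a hypothetical `stores_prompts` flag, not OpenRouter’s actual routing code; on the real service this happens server-side, and the policy is set in the dashboard.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Tuple


class ProviderError(Exception):
    """Raised by a (hypothetical) provider that is down or rejects the request."""


@dataclass
class Provider:
    name: str
    stores_prompts: bool          # stand-in for a provider's data-retention policy
    call: Callable[[str], str]    # sends the prompt, returns the completion


def route(prompt: str, providers: Iterable[Provider], allow_prompt_storage: bool) -> Tuple[str, str]:
    """Try eligible providers in priority order; return (provider_name, completion)."""
    # Data-policy filter: drop providers that violate the user's setting.
    eligible = [p for p in providers if allow_prompt_storage or not p.stores_prompts]
    errors = []
    for provider in eligible:
        try:
            return provider.name, provider.call(prompt)
        except ProviderError as exc:
            # Record the failure and fall back to the next eligible provider.
            errors.append(f"{provider.name}: {exc}")
    raise RuntimeError("all eligible providers failed: " + "; ".join(errors))


if __name__ == "__main__":
    def down(_prompt: str) -> str:
        raise ProviderError("503 Service Unavailable")

    providers = [
        Provider("provider-a", stores_prompts=False, call=down),
        Provider("provider-b", stores_prompts=True, call=lambda p: f"b:{p}"),
        Provider("provider-c", stores_prompts=False, call=lambda p: f"c:{p}"),
    ]
    # provider-a is down and provider-b is excluded by policy, so provider-c answers.
    print(route("hi", providers, allow_prompt_storage=False))  # ('provider-c', 'c:hi')
```

Filtering before trying keeps the policy absolute: a compliant request never reaches a disallowed provider, even when every allowed provider is down, in which case the call fails loudly instead.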

Pricing Model and Practical Usage

OpenRouter operates on a credit-based system rather than subscriptions. You buy credits (e.g., $10 or $99), and they are deducted per API call based on each model’s token pricing. This is flexible: you can mix expensive frontier models with cheaper ones without committing to a monthly plan. However, apart from a limited set of rate-limited free model variants, every request consumes credits. For developers doing rapid prototyping, this could be a disadvantage compared with providers that offer free usage allowances (such as the $5 in trial credits OpenAI has given new accounts). Pricing transparency is decent: each model page lists its cost per token, and the billing history is easy to review. One limitation I noticed: managing budgets across team members requires creating organizations, and credit allocation is not as granular as on some enterprise platforms. Also, because prices follow the underlying providers’ rates, costs can change without notice, though OpenRouter displays current rates clearly in the model explorer.
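To make per-token billing concrete, here is a small calculator for how a credit balance drains. The model names and per-million-token prices are placeholders, not OpenRouter’s rates; substitute the figures shown on each model page. It assumes input and output tokens are priced separately, as model pages typically list them.

```python
from decimal import Decimal

# Hypothetical prices in USD per 1M tokens; real rates are listed per model page.
PRICES = {
    "frontier-model": {"input": Decimal("3.00"), "output": Decimal("15.00")},
    "budget-model": {"input": Decimal("0.10"), "output": Decimal("0.40")},
}
PER_MILLION = Decimal(1_000_000)


def request_cost(model: str, prompt_tokens: int, completion_tokens: int) -> Decimal:
    """Cost of one call: input and output tokens are priced separately."""
    price = PRICES[model]
    total = prompt_tokens * price["input"] + completion_tokens * price["output"]
    return total / PER_MILLION


def calls_affordable(balance: Decimal, model: str, prompt_tokens: int, completion_tokens: int) -> int:
    """How many identical calls a credit balance covers before running out."""
    return int(balance // request_cost(model, prompt_tokens, completion_tokens))


if __name__ == "__main__":
    # A 2,000-token prompt with a 500-token reply, on a $10 top-up.
    print(request_cost("frontier-model", 2000, 500))                     # 0.0135
    print(calls_affordable(Decimal("10"), "frontier-model", 2000, 500))  # 740
    print(calls_affordable(Decimal("10"), "budget-model", 2000, 500))    # 25000
```

`Decimal` is used instead of `float` so that summing many sub-cent charges does not accumulate rounding error. Under these placeholder prices, the same $10 covers roughly 34 times as many calls on the budget model, which is the kind of mixing the credit model makes easy.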

Market Positioning and Recommendation

OpenRouter sits alongside tools like LiteLLM and AI Proxy as a model gateway. Unlike LiteLLM, which is often self-hosted and configuration-heavy, OpenRouter is a fully managed service with built-in fallback and data policies. Compared with calling the OpenAI API directly, OpenRouter offers broader model choice and better uptime through fallback. However, it adds a slight markup on top of provider prices, and you lose direct control over some provider-specific features (e.g., Anthropic’s extended thinking). The platform is ideal for developers and teams who need to experiment across models quickly, build agentic systems (as its integrations show), or require compliance-driven provider filtering. It is less suited to very predictable, high-volume workloads that could benefit from direct provider discounts. Overall, OpenRouter delivers on its promise of a unified LLM interface with excellent reliability. I recommend it for any multi-model project where uptime and flexibility matter more than the last fraction of a cent per token.

Visit OpenRouter at https://openrouter.ai/ to explore it yourself.
