First Impressions and Onboarding

Upon visiting the ThirdAI website, the immediate message is clear: production-ready GenAI on CPUs, with air-gapped deployment and dramatically lower costs. The landing page is crisp and enterprise-focused, with a prominent call to action to “Talk to Us” or book a consultation. There is no public sign-up or free tier visible; instead, the site directs you to request a demo. This suggests ThirdAI positions itself purely as an enterprise solution, not a self-service tool for individual developers.

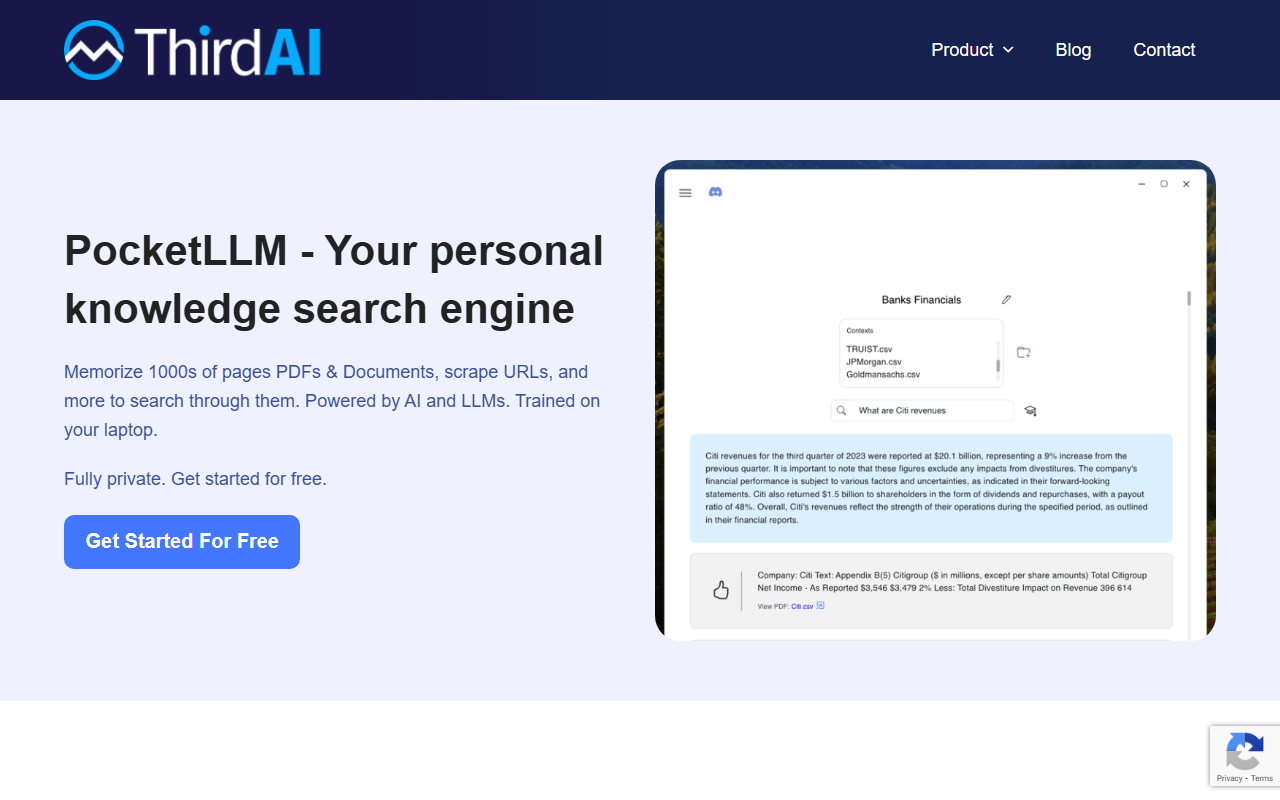

The dashboard is not publicly accessible, but the product walkthrough tabs (Compose, Run, Customize) suggest the platform provides a no-code interface for building AI applications. The platform claims to remove the headache of chunking, parsing, embeddings, vector databases, reranking, and fine-tuning. For a new user, this would mean a much simpler workflow than assembling separate libraries. The site promises a prototype in hours and a production app in weeks; in practice, onboarding likely begins with a consultation with their team.
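
To make concrete what the platform claims to abstract away, here is a deliberately naive, hypothetical sketch of three of those steps (chunking, embedding, and vector search) wired together by hand. It does not use or describe ThirdAI's API. The function names are illustrative, and the word-count "embedding" and in-memory list are assumptions standing in for a real embedding model and vector database.

```python
import math
from collections import Counter


def chunk(text: str, size: int = 8, overlap: int = 2) -> list[str]:
    """Split text into overlapping windows of `size` words (naive chunking)."""
    words = text.split()
    step = size - overlap
    # Stop early enough that the last window is not just the previous window's overlap.
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase term counts, standing in for a real embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(docs: list[str]) -> list[tuple[str, Counter]]:
    """Stand-in for a vector database: an in-memory list of (chunk, vector) pairs."""
    return [(c, embed(c)) for doc in docs for c in chunk(doc)]


def retrieve(index: list[tuple[str, Counter]], query: str, k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query (brute-force vector search)."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


if __name__ == "__main__":
    index = build_index([
        "ThirdAI runs AI applications on standard CPUs",
        "Air-gapped deployment keeps data on premises",
    ])
    print(retrieve(index, "run AI on CPUs", k=1))
    # prints ['ThirdAI runs AI applications on standard CPUs']
```

A production pipeline would replace each piece with a real component (a document parser, an embedding model, a vector store, a reranker) and then tune them together, which is the assembly work the platform says it removes.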

Technology and Use Cases

ThirdAI’s core differentiation is its ability to run on standard CPU infrastructure, without specialized GPU servers. The site attributes this to a technology that “mimics the sparsity in the human brain,” which it credits with low latency (a few milliseconds) regardless of model size. This is a bold claim, and while no specific model architectures are named, the implication is that they have optimized inference for CPUs.

Use cases span semantic/enterprise search, chatbots, AI agents, text extraction, document classification, named entity recognition, sentiment analysis, and more. The platform also supports LLM guardrails, implicit feedback & RLHF, and enterprise SSO — all critical for production deployments. Notably, testimonials from AWS and a large global system integrator mention performance gains of 30–40% on AWS Graviton3 instances and a 20+ percentage point accuracy improvement in RAG systems. This adds credibility to their claims, but independent benchmarks are not provided on the site.

Based on the site's description, a typical workflow would have a developer bring their own data, apply AI tasks via the Compose tab, deploy on their own VPC or on-premises, and then customize using behavioral feedback logs. The platform seems to cover the entire MLOps pipeline, but without a hands-on trial, I cannot verify the latency, ease of use, or accuracy outcomes.

Pricing and Market Position

Pricing is not publicly listed on the website. The only call to action is to book a consultation or request a demo, indicating a custom enterprise pricing model. This is common for infrastructure-level AI platforms, but it limits comparison shopping. Competitors include frameworks like LangChain or LlamaIndex for building RAG applications, and managed services like Azure AI or Amazon Bedrock. However, ThirdAI’s unique selling proposition is eliminating GPU dependency, which puts it in direct competition with solutions that require expensive hardware. In terms of positioning, it targets enterprises that prioritize data privacy, cost control, and deployment flexibility.

Alternative platforms like Hugging Face Inference Endpoints or Replicate offer GPU-based inference at scale, but ThirdAI argues that its CPU-only approach yields 100x better price-performance. Without public pricing or benchmarks, this claim is hard to validate, but if true, it could disrupt the current AI deployment landscape.

Who Should Use ThirdAI?

ThirdAI is best suited for enterprises that need to deploy AI applications with strict data privacy requirements (air-gapped), want to avoid GPU costs and complexity, and have a dedicated infrastructure team. Large organizations in finance, healthcare, or government could benefit significantly. The no-code interface also makes it accessible to teams without deep ML expertise, as a testimonial from a chief engineer who went to market rapidly suggests.

On the other hand, individual developers, startups on a tight budget, or anyone looking for a free trial may find the lack of public pricing and self-service onboarding a barrier. If you need to experiment quickly, or plan to scale on GPUs anyway, tools like Ollama or other local LLM frameworks might be more practical. Finally, reliance on ThirdAI's proprietary technology could lead to vendor lock-in, though the Extend feature suggests some room for customization.

In summary, ThirdAI offers a compelling value proposition for enterprises seeking private, cost-efficient AI deployment on CPUs. Its claims are supported by testimonials from reputable names, but they still need independent verification. I recommend it for organizations that prioritize security and cost over the freedom to use bleeding-edge models.

Visit ThirdAI at https://thirdai.com/ to explore it yourself.
